AI Generated Music: What's The Point?

Who is it actually for?

Generative AI is goddamn everywhere right now.

From your social media feed, to the corporate messaging apps, to software engineers’ code environments, and even in the Google Doc I’m writing this in, these tools are ubiquitous.

And if you haven’t heard of these tools yet, just log into LinkedIn or Twitter and you’re sure to get bombarded by the stuff. Which is why I’m not going to dive into the world of it at all, and will instead focus on one place where I think it is a huge waste of time.

Music.

I go into it a little bit in some of my recent roundups, but this time I am going to approach it with the same question from the title: what’s the point? It will be a listener’s perspective as I don’t work in the industry, nor does my composition from my school years qualify me as a musician, so I can’t comment on what it’s like within the industry itself. However, I don’t think that difference matters much, and I hope this post will explain why.

The news recently has been a mixed bag: Mozart AI has received funding for its AI generative music tool, Deezer has released an AI generated music detection tool, Sony is culling “deepfake songs” from streaming services, and the British government has backed down from allowing AI companies to be granted copyright to British music. And I feel that the overarching question of WHY we are actually doing this is missing from so much of the coverage.

So, let’s pose the question again: what’s the point?

Gen AI

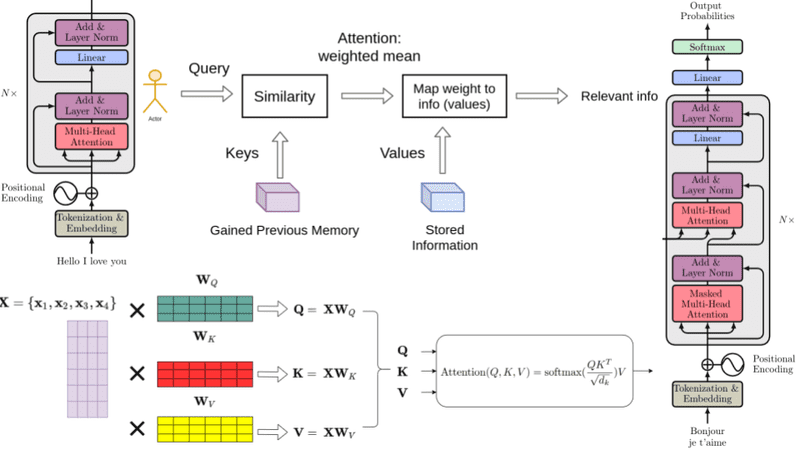

To start, let’s talk a bit about what generative AI is, and how it works (from a very high level).

I have a degree in data science and studied language models during my time at university so have a relatively educated grasp of how the technology works. I’m not going to dive into the technicalities as there’s enough already online about the nitty gritty of it, but an overview is called for here.

Generative artificial intelligence is a category of machine learning models that produce an output based on the data they have been trained with. By passing an obscene amount of data through these models, they can “learn” a probabilistic model of the data they have been trained with.

“Learning”, in this context, is where the model takes a “token” of the data (say, a word) and builds a map of all the words that precede and follow it, along with the probability of each of those words appearing before or after it.

For example, you could train a model on all the words in fairy tales. Then, looking at the word “upon”, the words “once” and “a” are going to have relatively high probabilities given that a lot of fairy tales start with “once upon a time”.

With this trained model, you can then give it an input, and it will use the input to then generate a response based on the likelihoods of the words that follow that input.

This is the “generative” part of generative AI. It creates output based on the probabilistic representation it has of the training data it has been supplied with. And these tokens don’t have to be words, and can be made to represent anything like images and sound. The premise is the same.
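To make the fairy tale example a bit more concrete, here is a minimal sketch of the idea in Python. It is a toy "bigram" counter rather than anything resembling a real language model: the three-sentence corpus and the function names are all made up for illustration, and it only looks at the single word that comes before, which is far simpler than what modern models do.

```python
import random
from collections import Counter, defaultdict

# Toy corpus standing in for "all the words in fairy tales".
# These sentences are invented purely for illustration.
CORPUS = [
    "once upon a time there was a princess",
    "once upon a time there was a dragon",
    "once upon a hill there lived a giant",
]


def train_bigrams(sentences):
    """For every word, count which words follow it and how often."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            follows[current][following] += 1
    return follows


def next_word_probabilities(follows, word):
    """Turn the raw follow-counts for a word into probabilities."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    if total == 0:
        return {}
    return {w: c / total for w, c in counts.items()}


def generate(follows, start, max_words=8, seed=0):
    """Generate text by repeatedly sampling a likely next word."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    words = [start]
    for _ in range(max_words - 1):
        counts = follows.get(words[-1])
        if not counts:
            break  # the model has never seen anything follow this word
        choices, weights = zip(*counts.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


model = train_bigrams(CORPUS)
print(next_word_probabilities(model, "upon"))  # "a" is the only word ever seen after "upon"
print(next_word_probabilities(model, "a"))     # "time" is the most likely, but not the only option
print(generate(model, "once"))
```

In this toy corpus "once" is always followed by "upon" and "upon" by "a", so every generated sentence starts "once upon a" before the model has to start guessing. That guessing step is the whole trick, just at a vastly smaller scale.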

And before anyone comes for me, this is deliberately an incredibly simple and generalised description of the technology. There’s loads more to this and the models actually generate much more complex probability representations between all the tokens they see in the training data. The models build probabilistic mappings between words, the sentences they’re in, the paragraphs that follow, and the tone of voice that the word is written in. The mathematics behind these models are genuinely quite impressive.

But for this post, this explanation is as much as I’m going to give as it highlights the main point I want to make with this.

It’s all probability.

There’s no actual “knowledge” in these models (relative to human knowledge), it’s all probabilistic representations of the data that those models have been trained with. And then the outputs are just what is likely to be the answer to the given input. To put it bluntly, it’s a guessing machine.

Which is why I often find that the terms machine “learning” and “intelligence” can be a bit of a misnomer for what’s actually going on, because they suggest that we can equate what this tech does with human intelligence and learning processes. But I would argue that they’re not the same: would you rather hire a human that “knows” a given speciality or someone that is just really good at “guessing” what someone with the speciality would output?

And with these models being trained on so much content that humans have written, of course they’re going to be very good at emulating what a human would expect to hear given the input that human has provided.

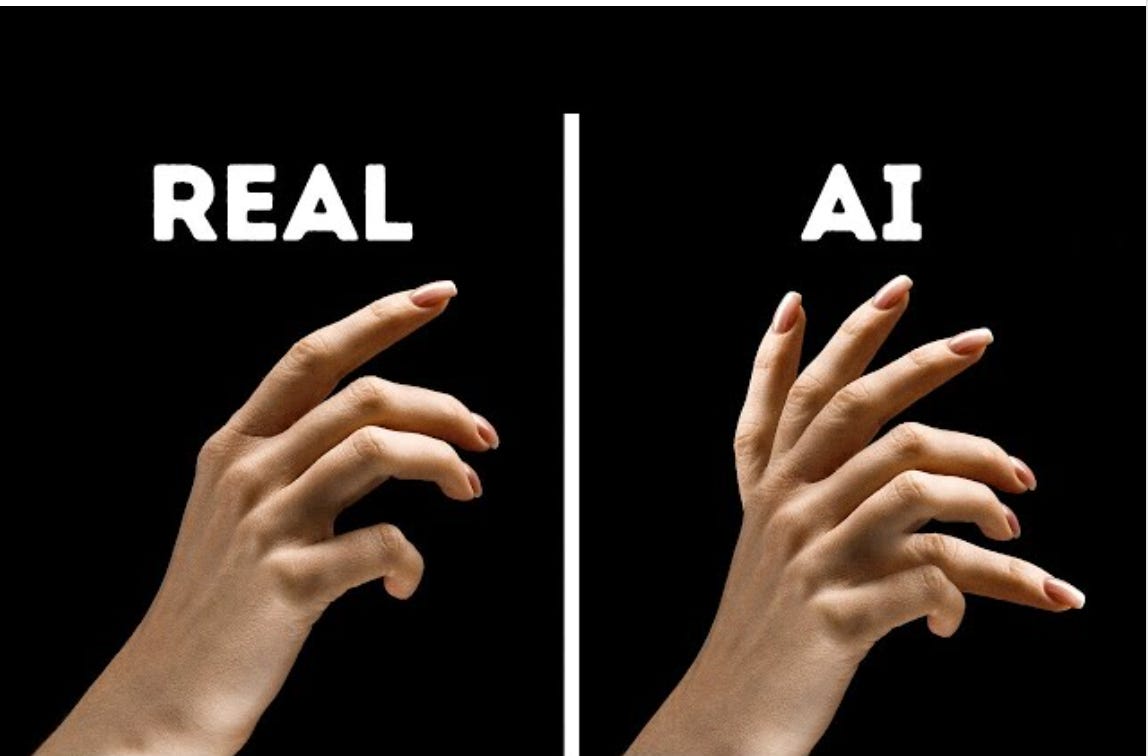

However, as with anything probabilistic, it’s sometimes wrong. This shows itself in the form of “hallucinations”, where the model predicts an output that is not what was expected. This is most obvious in the infamous pictures of people with more than five fingers.

It all reminds me of the Chinese room thought experiment. Say you’re a non-Chinese speaker in a room and you are passed notes in Chinese under the door. You have a computer that tells you, in your native language, step by step instructions on how to answer these notes and what Chinese characters to write down and pass back under the door. Your notes are the perfect answer to the note that you were given initially.

Do you understand Chinese in this experiment or are you just following step by step instructions on what to write given an input?

And now apply this to generative AI: does it actually “understand” what you’re telling it, or does it just use step by step probabilistic sequences to provide an answer that it assumes the user expects?

Pros Of The Tech

I wouldn’t be surprised if you assumed, following that description, that this technology has few general use cases. However, it most definitely does have them, most notably in the world of technology itself.

Lots of tools (like Claude Code and GitHub Copilot) are trained on huge amounts of code data. GitHub especially, and controversially, trained its models on all the code that has been stored on its site without getting permission from the code’s uploaders. So these models have built vast and detailed probabilistic representations of code, its contexts, and its engineering patterns.

This can then be used to generate code that is likely to follow a given input and that’s quite useful as an engineer, as writing software tends to follow common implementation patterns so it was already highly likely that you were going to write that anyway.

This has been a huge development for the software engineering industry as it means that engineers can write code much faster than they could already. Of course, there’s the question of hallucinations, and so care must be taken to ensure the output code is actually what is required. But I often use these tools in my day to day to sketch out proof of concept ideas much faster than I previously could, as I can trust that the models have seen enough similar proofs of concept in their training data to reliably output one that suits my specific use case.

And this ability to output a response given a particular use case in a field of common patterns such as software development is really powerful as it means you can save a lot of time letting the computer do work that may have been long and boring before. Like software testing.

Another example of this technology being used well is in the field of software security. These models can take an input of all the code of a given system and can output where the security flaws are likely to be. This gives security researchers a step up in defending systems against attackers as, previously, many of the issues would have had to be found by humans and missing one could result in a successful attack against the system. This could of course be used by attackers as well to the opposite effect and allow them to find similar security holes in systems to exploit. Cybernews on YouTube has an excellent video of this genuinely happening with Anthropic’s Claude model.

So, there’s no surprise that generative AI is mostly built and used by tech people as that’s where these models are most effective.

However, there’s one thing to note about the world of tech and that’s that many people represent the industry by ONLY the output of the people involved. It’s often very tempting to quantify the output of a software engineer by the lines of code that they write. After all, that’s what they’re there for, right? Building software. You could naively say one bricklayer is better than another because they’re faster at laying bricks. The same could be said of software engineering, but you would be ignoring so many other factors like infrastructure knowledge, stakeholder familiarity, and codebase quirks, all of which are key to developing good software.

This, coupled with how quantifiable other aspects of the tech industry are (number of customers, ad revenue, and profit margins), means that the industry often views itself as the product of individual outputs. And this can then lead those within it to assume that other industries are similar in that regard, and drive tech people to build generative AI solutions for other industries.

Like music.

Generative AI In The Music Scene

So, where could you use this technology?

To Listen To

If we were to look at music from a really high level perspective, there are some similarities to the tech industry here.

A musician produces songs, the labels sign musicians with songs, songs are uploaded to streaming platforms, we listen to songs on these platforms, and charts rank the songs. It’s all songs.

So, if this is what the music industry is, then lowering the cost of producing the songs is a no-brainer for anyone looking to maximise the profit made behind songs by having these models produce them. You can cut costs on musicians and the time taken to produce songs and the staff required to maintain a roster of musicians and the recording studios they require.

But is this all that music is?

Because if it was just about songs, why is it that companies are driven to create AI generated profiles of the fake artists behind the songs? Would that not imply that music is MORE than just songs?

Take Xania Monet, for example, an AI generated “musician” created by Telisha “Nikki” Jones. Her top song How Was I Supposed To Know has 13 million streams on Spotify at the time of writing which is an impressive feat for any artist.

But what does it say that, for a tool that focusses so heavily on pure output, an “artist” was created behind the music to give it a face? Because once you create a “person” behind the music, then you could argue that it’s now no longer about the songs and more about the person behind it. And their music is the way to emotionally connect with them.

But there’s no one to connect with.

So, you could only imagine that the listener, eventually, will want to connect to the person behind the music. But when there’s no one there, then who are they going to connect with? Because isn’t that what art is, connection via a creative medium? I remember when I first heard Lose You To Love Me by Bombay Bicycle Club at Lost Village 2021 and, to this day, it’s a very personal connection I have with the artists. Would it have been the same love for the song had there been no one there to connect with? Probably not.

The other side of this is the concerning rise of right-wing AI generated accounts that have started popping up, some affiliated directly with political parties. It adds another layer of discomfort to this technology that the only people that seem to be using it for music are those with seemingly deliberately malicious intent.

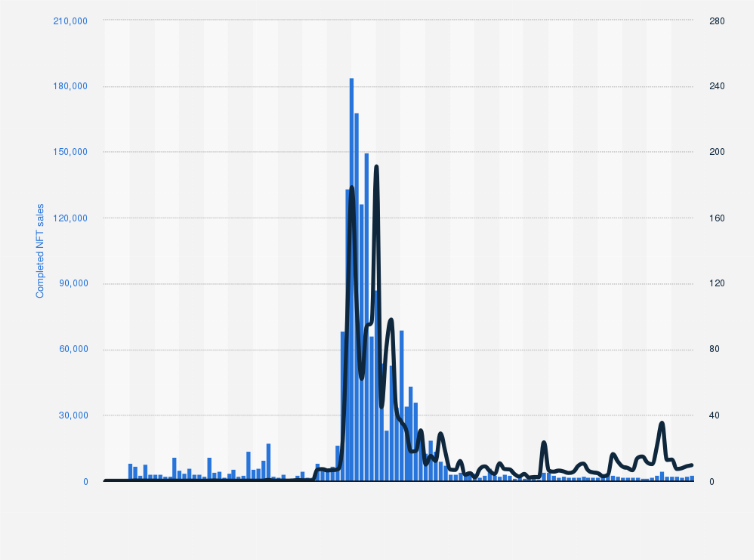

And this raises the question: what if all the streams that these artists are getting now are only short term hype because of the novelty of such technology? Once the technology becomes “yet another thing that tech can do” and the media hype cycle ends, won’t people get bored of this lack of connection and move back to human made music? This is a familiar cycle we all witnessed with NFTs and the metaverse (which Meta is shutting down permanently soon), so what if AI generated music for human listening is just a short term fad?

Well, I imagine it wouldn’t be if the music was genuinely something that people enjoyed listening to. Let’s take Xania Monet again and give her (its?) top track another listen.

Well, it sounds like shit.

The vocals don’t sound real, the piano riff is weirdly crunchy, the melody is nothing novel, and even the “artist” itself looks weirdly wrong. It doesn’t feel right. The uncanny valley of music.

Drew Meadows is another such “artist” and it’s even more obvious on his Spotify profile and his songs also sound like shit.

And here’s another demo I found on Twitter about an open-source model that can generate music. It also sounds like shit. The individual stems lack any kind of personality, the melody is generic, the timbre sounds artificial, and none of it really seems to gel together.

Go on and give the Suno AI homepage a browse and try out the demos on there. None of them sound particularly good, it’s all generic nonsense with voices that sound off. And if you ask me, this is all kind of expected given that the models are built from the aggregated amalgamation of all their training data. They will ONLY ever produce generic outputs, as their internal probabilities are only ever going to tend toward the absolute average. And average in music is generic.

So, what’s the point?

To Parody

“Parody!” you may hear someone say at this point. As previously stated, the models are good at taking an expected output and factoring in a given context that’s provided. This can be used to relatively comedic effect by inserting more interesting vocals into songs.

The AI Homer Soulseek flood of 2026 is a good example of this, where the file sharing platform Soulseek was somehow flooded by a single user uploading thousands upon thousands of tracks that had an AI generated Homer from the Simpsons voice instead of the original vocalist. You can picture the user now, desperately searching for that one track they really want only to find out it has Homer’s voice instead. I can’t lie, I find that pretty funny.

For all of about a minute.

Then reality sinks in, and the user keeps searching, only to find more and more Homer AI vocals on the tracks that they want to find and this inevitably becomes this frustrating rage inducing loop of the user losing trust in the discovered songs. So the original parody is lost in a sea of anger as you start to understand that your search has gotten that much harder because any given user can flood the entire system with essentially garbage all at the click of a button.

Gabi Belle, in her overview of AI generated music, touches on this topic and makes a very good point: the whole idea of parody is now boring if you can do it at the click of a button. The entire point is to learn music, the instruments, the theory and then apply a given nuance in such a creative way that it becomes entertaining. “Weird Al” Yankovic is one of the legends of parody, and rightly so, because he uses music and lyrics in such a way that has me still singing “my bologna” whenever The Knack comes on.

The absolute pinnacle of modern musical parody, if you ask me, is the melodica cover of the Jurassic Park theme that caused such a storm when it came out 14 years ago and is still quoted in memes today. Its vague backstory has you questioning whether it was intended to be an earnest performance and that adds a comedic layer to the performance that is missed when there’s no effort required in making it. A click of a button is inherently flippant.

To Have On In The Background

The final use case that I can conceivably imagine is the world of royalty free background music. Because there’s no “human” involved, it could be argued that there’s no copyright on the music, so it can be used royalty free in monetisable content.

But is it truly royalty free?

These models need to be trained on real, human-made music, and that training will definitely require some form of copyright permission. You are profiteering off the backs of unpaid musicians by training these models, so you must have some form of permission to do so. The lawsuits are still ongoing, and are likely to continue for a while given how different the scenario now is with this technology, but there is 100% going to be some form of legal requirement around copyright to even train these models. So it’s not technically royalty free then.

And then, this completely ignores the fact that royalty free music already exists! Any avid YouTube binger like myself is more than familiar with Kevin MacLeod, whose royalty free tunes soundtracked so much of the platform’s early days and are still used in videos to this day. And he’s just one of many artists out there who are happy to give it away for free. If this music is destined to be used in the background of the true content you want to monetise, why would you use legally questionable AI generated music that sounds like shit?

So, please tell me, where is the use case?

The Reception

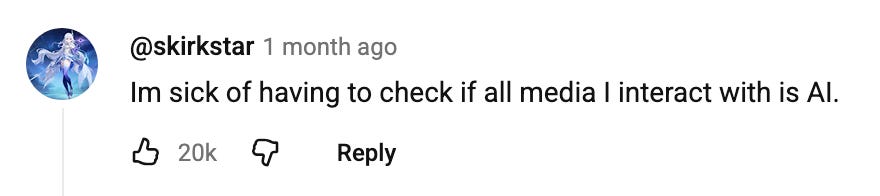

It’s getting increasingly obvious that many online are getting tired of the content.

The top comment of Belle’s video is someone lamenting about the extra effort required nowadays to check whether something they see or hear online is AI generated and many other comments tend to agree. Jacksfilms also demonstrates the experience of discovering that something online is AI generated and it’s not a positive one. Logging into Twitter is the same, and my feed is aflame with people dunking on the “clanker brained gooning over AI slop”.

And because it’s so easy to produce, it’s getting exhausting to have to check because any content anywhere nowadays can be AI generated. It adds yet another frustration to the growing enshittification of social media because what was once a fun way of sharing interesting content with your connections online is now just a slog of having to check whether something you see is AI generated or not, followed by the eventual disappointment when you realise it is.

Social media (despite its flaws) is arguably one of the most incredible products of the tech industry from the past decade and a half that allows people to connect with each other across the world and is something I rely on to remain in touch with many of my friends from home. So, it could be argued that this content flooding our feeds is the cannibalisation of the product by the tech industry by making normal people less interested in using it. They’re digging their own grave.

And this is being reflected in business: many companies have started hiring “storytellers” to differentiate themselves from the slop by having a human write text that doesn’t sound like AI generated output (how this differs from a good copywriter I don’t know).

In music too, Bandcamp has outright banned AI generated content, and Deezer has released this AI generated music detection tool to help others identify the slop. It’s clear that both companies see this as what the market desires and view the tech as a threat to their business models.

Spotify, in its journey to ruin its reputation further, has yet to follow down this path, especially after the controversies surrounding its “curated” playlists for background listening that are claimed to be filled with AI generated trash.

Outside of the music scene, others are also beginning to call out the bullshit that these AI companies are pushing, like the recent Delve controversy about them falsely issuing compliance certifications which, for other such nerds like me, is a HUGE issue for which the law can fuck you up. We’re talking about healthcare compliance and the necessary privacy implementations to ensure that confidential patient data is kept safe and secure. Serious stuff!

Back to the music though, is there anyone that actually listens to AI generated music?

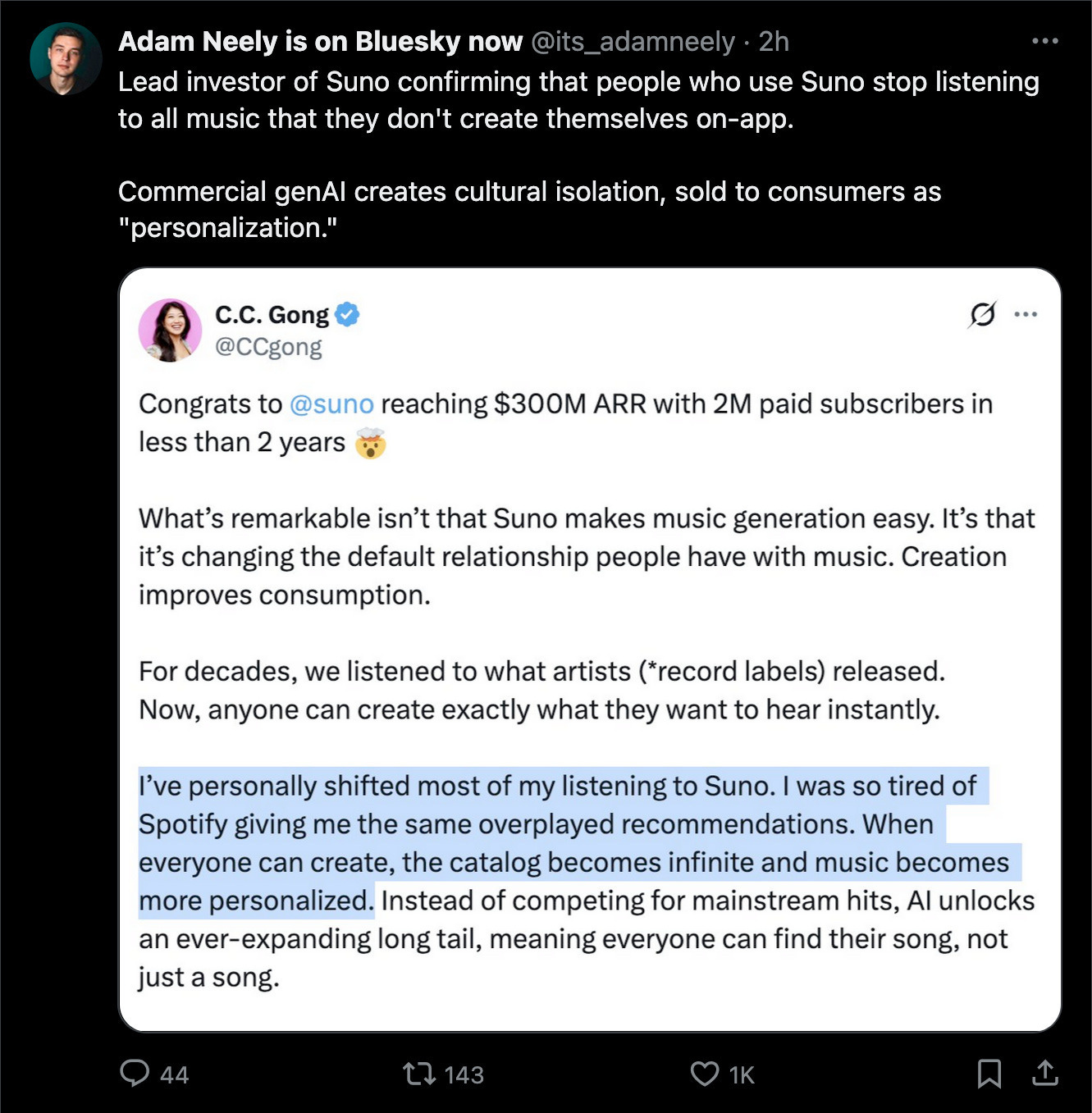

Well, apparently a lead investor in Suno AI does:

What does it say about a business when its best customer is itself?

That’s like paying yourself to clean your own house and claiming you made money; it’s not how business works. And the sheer audacity to proudly announce that you don’t value music beyond whatever suits your immediate need is a remarkably shallow view of art as a concept. That’s openly admitting that you never want to feel challenged by a creative medium. Viewing music as something that only serves you.

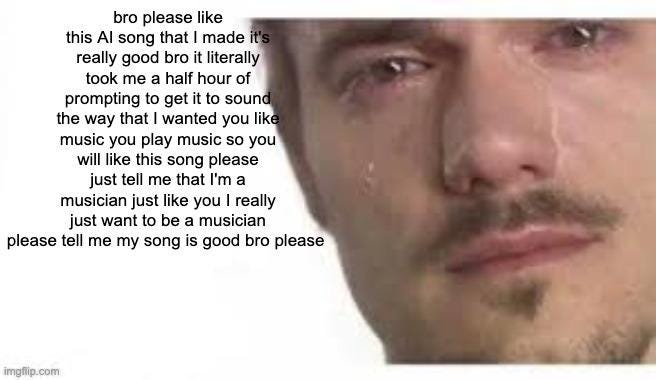

It’s so divorced from the dreams of anyone creative.

The Big Picture

You can’t ignore the pretentiousness of hating on it all though. To claim that I know better than so many people building and using this technology is a bold stance to take.

After all, I used to marvel at this technology when I was doing my data science degree, so what’s to stop others from dreaming of a better world with it? I remember working on this translation model that we were using in groups to translate English to Japanese and back to English again. I thought it was crazy that these boxes of silicon could do that.

But I began to feel justified in my belief when I started learning about the actual business of generative AI and the finances behind it. If you’ve been following any sort of tech news in these past few years, you will know what I’m talking about when I mention the AI Bubble.

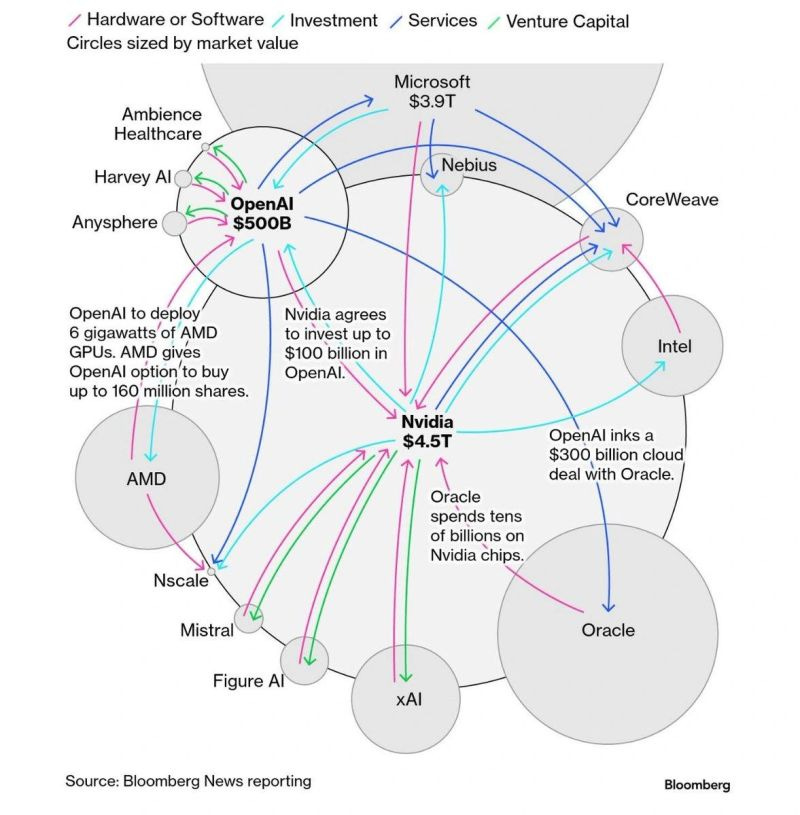

The circular financing deals that power this monster of an industry. How Nvidia, Microsoft, OpenAI, Oracle, and all the data center companies are all pumping money into each other to raise the stock price and drive more money into the industry.

We all know it’s rotting, and we all know something bad is going to happen out of the other end of it. We’ve seen it before in the mayhem that has been the last 20 years of on-and-off financial crises that are decimating the financial future of younger generations. Ed Zitron covers the industry in detail and goes through the financials with such a fine toothed comb to lay bare just how bad some of these deals can be.

For one, Nvidia, the giant behind most of this boom, has begun financing companies on the condition that they buy Nvidia tech which smells an awful lot like what Enron did back in the day with its creative accounting tricks. But don’t worry, guys, they’re not Enron!

The industry in general feels like they’re pushing this tech as the future, whilst ignoring any evidence to the contrary for the present. They’re not taking stock of what people want right now.

The tech world has basically been spending A LOT of money to build kinda useful things over the past 20 years. Almost unnecessarily, as the solutions they’ve delivered are comparatively small considering the cost. Take ride hailing apps, for example. At the end of the day, they’re just allowing people to request a ride from a person in a car remotely. As much as there can be some complexities to the system, the premise is relatively simple. Uber’s investment totals $13 billion. Does it cost that much to build an app that is not much more complex than ringing someone and requesting a ride?

Well, because the output is actually quite useful and the benefits have improved things like pricing, rider safety, and convenience, many see the cost as necessary to reap the rewards.

Wouldn’t the AI companies then be incentivised to really sell the potential future of this tech instead of trying to deliver desired results today? If tech has always delivered “what was eventually needed”, then doesn’t that give companies an excuse to pursue more nefarious outcomes because we believe that this industry has never let us down before? Didn’t this happen exactly like this with NFTs and the metaverse?

The way that this tech has delighted the LinkedIn type is only to be expected. They are the ones who see this tech as a way of saving costs with innovative solutions, solutions you think you have come up with cleverly because the AI has had you believe that you were being oh so clever about it. These AI tools are inherently designed to be positive towards you, as that means you’re more likely to be drawn to them: they feel more like a friend due to their friendly, human-sounding output.

Weights can be adjusted in this tech, and you can manipulate the mathematics to result in a more positive output that pleases whoever is using it. And this, in turn, improves the likelihood you’re going to talk about its benefits to others. Because it never questions your belief system. You don’t need to go far to start hearing the stories of those being driven to do dangerous acts due to involvement with generative AI.

There’s such a cost of this technology to society, and I am struggling to see the long term net benefits. On top of the horror of the aforementioned self harm, there’s the environmental cost of data centers, the aforementioned poisoning of global financial systems, and the promised unemployment crisis of replaced jobs (which is somehow sold as a good thing). People have started even outsourcing their thinking to this technology with many online joking about how they can’t live without the tech any more. And we’re meant to be excited by this?

As a tech worker myself, it’s definitely made my job faster though. Sometimes overwhelmingly so. But it’s definitely improved my productivity. Even if it has dimmed the pride I so often feel when completing a well done job as it’s not really all due to me now any more, is it?

Finishing Off

Where were we again? Oh yeah, music.

It’s true that it’s tempting to see music solely through the output of musicians and ignore the infrastructure encompassing them like the music schools, instruments, online communities, and the venues like concert halls and clubs. Without the armada of workers running labels, managing artists, promoting events, and managing venues. And all of their staff in the background. It’s tempting to only see the scene through the songs themselves.

And if you do, then you would believe that generative AI is exactly what we need.

But it doesn’t seem like that’s the case at all. So, why are we doing this?

What’s the point?

I’m going to end this on a gorgeous quote by Kate Anderson from Aardman, the Bristol based animation studio. In their latest video with the Wall Street Journal, Anderson ends the video with the following:

“It’s all about the soul, the human input. And I really don’t understand why you would want to, kind of, have a machine to do that”